Data Center Trade Offs

We Need AI, Data Centers, Water, Power, Cooling: How Are We Balancing It All?

VOLUME 1 - ISSUE 20 ~ February 27, 2026

In this edition of the “CIO Two Cents” newsletter, I pull together the various angles of data center topics that have been front and center for several years now, including how rising chip density and resource demands are reshaping the way we think about energy, water, and cooling.

— Yvette Kanouff, partner at JC2 Ventures

The JC2 Ventures team: (John J. Chambers, Shannon Pina, John T. Chambers, me, and Pankaj Patel)

(1) AI-driven data centers are scaling faster than the infrastructure designed to support them.

(2) Higher chip density is accelerating the shift from traditional air cooling to advanced liquid and closed-loop systems.

(3) Water and energy commitments must balance global ambition with measurable local impact.

I find current data center discussions fascinating. In today’s world, data centers are essential, and we need to expand them, yet we seem overwhelmed by discussions of power, water, cooling, space, and other constraints. I thought it might make sense to bring these tradeoffs together in a blog post that lays them out clearly.

History

First, a tiny bit of history. As we all know, data centers used to be mostly air-cooled. For those of us in the equipment space, we worked a lot with fans and ensured sufficient open space to allow air cooling. We used electricity in our data centers, and water usage was low.

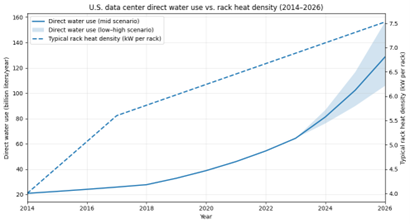

Around 2019 or so, we began using evaporative cooling, where water evaporation cooled the air. It was energy-efficient but at the cost of significantly higher water usage, which sparked concern. By 2023, US data centers alone consumed an estimated 17.4 billion gallons of water annually for cooling, and a whopping 211 billion gallons if we include electricity generation.

Current research projects that annual data center water usage will grow to between 40 and 74 billion gallons by 2028. Additionally, the Department of Energy has noted that US data center energy consumption could triple, potentially reaching 12% of total US electricity consumption within the same timeframe.
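To put those water numbers in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes the 2023 figure (17.4 billion gallons) and the 2028 projection (40 to 74 billion gallons) measure the same thing, namely direct cooling water, which the sources quoted above do not explicitly confirm. The function names are my own, chosen for illustration.

```python
# Figures quoted in the text, in billions of gallons per year.
# Assumption: both years measure the same kind of water use (direct cooling).
WATER_2023 = 17.4
WATER_2028_LOW = 40.0
WATER_2028_HIGH = 74.0
YEARS = 2028 - 2023


def growth_multiple(start, end):
    """How many times larger the end value is than the start value."""
    return end / start


def implied_annual_growth(start, end, years):
    """Compound annual growth rate needed to go from start to end."""
    return (end / start) ** (1 / years) - 1


for label, target in (("low", WATER_2028_LOW), ("high", WATER_2028_HIGH)):
    multiple = growth_multiple(WATER_2023, target)
    rate = implied_annual_growth(WATER_2023, target, YEARS)
    print(f"{label} case: {multiple:.1f}x the 2023 level, "
          f"about {rate:.0%} growth per year")
```

Under that assumption, even the low end of the projection means water use more than doubling in five years.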

Cooling Options

I recall looking at liquid cooling in the 2010s as a potential option for equipment design. Although not mainstream at the time, this solution has since become far more prevalent. In immersion cooling systems, servers, routers, switches, etc. are submerged in dielectric liquid, reducing water usage by almost 95%. It’s not without criticism, specifically maintenance complexity, risk of leakage, and cost. That said, it is expected to have significant adoption for future AI workloads.

Another actively discussed solution is glycol systems, which eliminate evaporative loss of water. These systems are also mature and increasingly deployed in AI-driven data centers, providing significant improvements in energy efficiency and, of course, water conservation. Glycol systems are also not without concerns, specifically initial deployment costs, maintenance costs/complexity, and potential leak risks.

Data center giants are making ambitious water usage pledges, including net-positive ones. As with everything, these face a significant amount of skepticism due to the lack of standardized frameworks for measuring such pledges and the ongoing debate over how water quality should be assessed. Additionally, global water replenishment commitments might not adequately address localized water stress in the communities where data centers operate.

A final area of discussion that I will mention is recycled water. On the surface, using ‘old’, treated water seems like an elegant solution. Yet concerns remain about the quality of such water leading to scaling and corrosion of infrastructure. The EPA notes that mineral-heavy data center water requires aggressive treatment. Most data center water can only be recycled a finite number of times. Bringing recycled data center water up to drinking-level standards would increase the cost of recycling by 2-4 times.

Chips

While we are patting ourselves on the back for water-usage options, I should mention what is happening in parallel with chips. As I’ve talked about many times in the past, 3D chips are stacking billions of transistors, raising the power density from 4-5kW per rack a decade ago to over 100kW (even 200kW) for a modern AI-centric rack. The additional density and heat make traditional air-cooling methods inefficient, leading to liquid cooling solutions.
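A simple heat-balance sketch shows why air struggles at these densities. It uses the standard relation that heat removed equals mass flow times specific heat times temperature rise, with textbook approximations for air and water near room temperature. The 10 K coolant temperature rise is an assumption I picked for illustration, and water stands in for "a liquid" here; glycol mixes and dielectric fluids have different properties.

```python
# Approximate fluid properties near room temperature.
AIR_DENSITY = 1.2             # kg/m^3
AIR_SPECIFIC_HEAT = 1005.0    # J/(kg*K)
WATER_DENSITY = 1000.0        # kg/m^3
WATER_SPECIFIC_HEAT = 4186.0  # J/(kg*K)

# Hypothetical design choice: coolant warms by 10 K passing through the rack.
ASSUMED_TEMP_RISE_K = 10.0


def volumetric_flow_m3_per_s(heat_watts, density, specific_heat, temp_rise_k):
    """Coolant volume per second needed to carry away heat_watts."""
    return heat_watts / (density * specific_heat * temp_rise_k)


# Rack power levels mentioned in the text, in kW.
for rack_kw in (5, 100, 200):
    heat = rack_kw * 1000.0
    air = volumetric_flow_m3_per_s(
        heat, AIR_DENSITY, AIR_SPECIFIC_HEAT, ASSUMED_TEMP_RISE_K)
    water = volumetric_flow_m3_per_s(
        heat, WATER_DENSITY, WATER_SPECIFIC_HEAT, ASSUMED_TEMP_RISE_K)
    print(f"{rack_kw:>3} kW rack: air {air:.2f} m^3/s, "
          f"water {water * 1000:.2f} L/s")
```

With these assumptions, a 100kW rack needs several cubic meters of air every second but only a few liters of water, which is the gap that pushes designs toward liquid.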

You may remember TSMC touting 3D chips and expectations for 1+ trillion transistor GPUs back in 2024. According to some projections, CPUs could reach 300 billion transistors by 2039, with GPUs exceeding 1.5 trillion transistors.

What Now?

This month, Oracle released several articles and blog posts on the AI data centers it is building today in New Mexico, Michigan, Wisconsin, and Texas, using closed-loop, non-evaporative designs for cooling and touting their efficiency and reliability. Microsoft and Google have both made pledges to replenish more water than they consume by 2030 and to help improve water quality in their areas of operation. With some countries focusing on energy efficiency standards, denser chips generating more heat, and data centers looking at a redesign of water usage, this is certainly an area for us to continue to follow.

Image of the Moment

Created using ChatGPT; reviewed and edited by the author